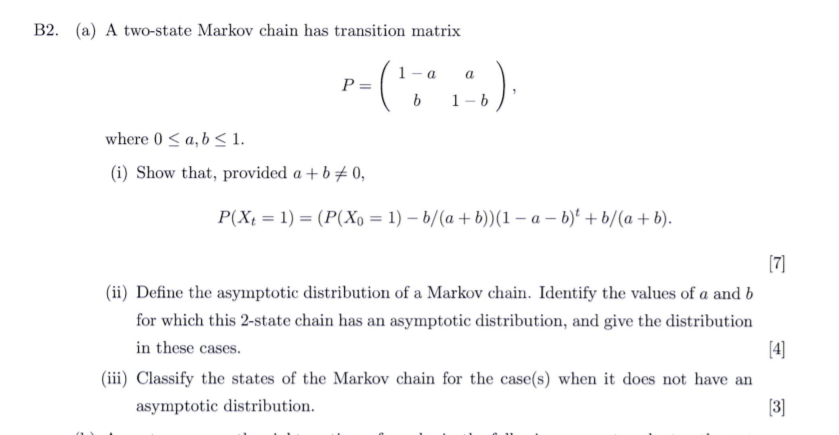

Consider the Two-state Markov Chain With the Following Transition Matrix

Regular Markov chains and steady states. A canonical reference on Markov chains is Norris (1997).

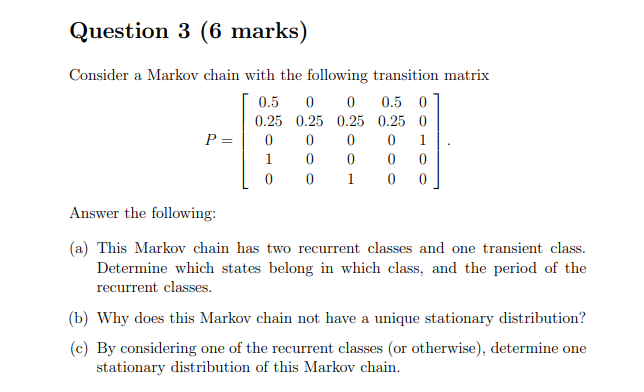


We will begin by discussing Markov chains.

For example, consider the following transition probability matrix for a 2-step Markov process. Example 49: Consider the Markov chain consisting of the three states 0, 1, 2 and having transition probability matrix P. It is easy to verify that this Markov chain is irreducible. Consider the following transition diagram.

Geometrically, a Markov chain is often represented as an oriented graph on S, possibly with self-loops, with an oriented edge going from i to j whenever a transition from i to j is possible, i.e. $P_{ij} > 0$. The state transition matrix P has a useful property: multiplying it by itself k times gives the matrix $P^k$, whose (i, j) entry is the probability that the system is in state j after k transitions, starting from state i. All states in a Markov chain can be partitioned into disjoint classes.
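The k-step property can be checked numerically. The sketch below is only an illustration: it uses the two-state chain with states A and B quoted in this post (stay at A with probability 1/3, move to B with probability 2/3; stay at B with probability 1/5, move to A with probability 4/5), and the helper names `mat_mul` and `mat_pow` are hypothetical, not taken from any source.

```python
from fractions import Fraction as F

def mat_mul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def mat_pow(p, k):
    """Return p raised to the k-th power (k >= 0) by repeated multiplication."""
    n = len(p)
    # Start from the identity matrix, which is P^0.
    result = [[F(int(i == j)) for j in range(n)] for i in range(n)]
    for _ in range(k):
        result = mat_mul(result, p)
    return result

# Row 0 is state A, row 1 is state B; each row sums to 1.
P = [[F(1, 3), F(2, 3)],
     [F(4, 5), F(1, 5)]]

P2 = mat_pow(P, 2)
# P2[0][1] is the probability of being in B two steps after starting in A.
print(P2[0][1])
```

Exact fractions are used so that row sums stay exactly 1; with floats the same code works but the sums are only approximately 1.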

List the transient states, the recurrent states, and the periodic states. Classes form a partition of the states. (b) Show that this Markov chain is regular.

Is the stationary distribution a limiting distribution for the chain? Consider a Markov chain with the following transition probability matrix P. Let P be the transition matrix of a Markov chain.

INEN435 Stochastic Operations Research, Homework 3, Problem 3: Consider the Markov chain with three states $S = \{1, 2, 3\}$ that has the following transition matrix 12 14 141 P 13 12 12 23 0. It is the most important tool for analysing Markov chains.

1. Consider a Markov chain with state space $S = \{1, 2, 3, 4\}$ and transition matrix

$$
P = \begin{pmatrix} 0 & 0 & 1/4 & 3/4 \\ 1/2 & 0 & 0 & 1/2 \\ 0 & 1/3 & 0 & 2/3 \\ 0 & 0 & 1 & 0 \end{pmatrix}
$$

(a) Find the ... Then use your calculator to calculate the nth power of this one-step transition matrix. If it starts at B, it stays at B with probability 1/5 and moves to A with probability 4/5.

We borrow an idea used in Wang and Buihler (1991) by looking at the Markov chain $X_i$ through its sojourn time in the state space. First write down the one-step transition probability matrix. A Markov chain, or Markov process, is a stochastic model describing a sequence of possible events in which the probability of each event depends only on the state attained in the previous event.
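The defining property, that the next state depends only on the current one, is easy to see in a simulation. The following is a minimal sketch, assuming the two-state A/B chain quoted in this post; the function name `simulate` and its parameters are hypothetical.

```python
import random

def simulate(p, states, start, n_steps, seed=0):
    """Sample a path of n_steps transitions from the chain with matrix p.

    Only the current state's row of p is consulted at each step,
    which is exactly the Markov property.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    current = states.index(start)
    path = [start]
    for _ in range(n_steps):
        current = rng.choices(range(len(states)), weights=p[current])[0]
        path.append(states[current])
    return path

# Row 0 is state A, row 1 is state B.
P = [[1 / 3, 2 / 3],
     [4 / 5, 1 / 5]]

path = simulate(P, ["A", "B"], start="A", n_steps=10)
print("".join(path))
```

Counting how often each state appears in a long path gives a rough empirical estimate of the long-run proportions.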

Consider the Markov chain with the state-transition diagram shown in Figure 1222. (a) Draw the transition diagram that corresponds to this transition matrix. P 8 0 22 7 13 3 4.

Consider a continuous-time Markov chain $\alpha^{\varepsilon}(t)$ with a countable state space $\mathbb{N} = \{1, 2, \ldots\}$. A regular chain is defined below. A Markov chain has two states, A and B, and the following probabilities.

Is this chain irreducible? (c) Find the long-term probability distribution for the state of the Markov chain. The first column represents the state of eating at home, the second column represents the state of eating at the Chinese restaurant, ...

Identify the members of each chain of recurrent states. How to use the Chapman-Kolmogorov (C-K) equations to answer the following question. Is this chain aperiodic?

To achieve such an approximation, one approach is to model the underlying systems with the help of singular perturbation theory (see e.g. [2]), resulting in a two-time-scale formulation that leads to the use of singularly perturbed Markov chains. Denote by $T_i$ the length of the ith sojourn in state 0 and by $Z_i$ the length of the ith sojourn in state 1. Give the transition probability matrix of the process.

Two states that communicate are said to be in the same class. In Lectures 2 and 3 we will discuss discrete-time Markov chains, and Lecture 4 will cover continuous-time Markov chains. Another special property of Markov chains concerns only so-called regular Markov chains.

For example, it is possible to go from state 0 to state 2: one way of getting from state 0 to state 2 is to go from state 0 to ... A Markov chain is irreducible if there is only one class.

For a finite state space, the transition matrix needs to satisfy only two properties for the Markov chain to converge. Define $p_{ij}$ to be the probability of the system being in state i after it was in state j at any observation. Classification of states: we say that a state j is accessible from state i, written $i \to j$, if $P^n_{ij} > 0$ for some $n \ge 0$.

A countably infinite sequence in which the chain moves state at discrete time steps gives a discrete-time Markov chain (DTMC). To build the transition matrix, list all states of $X_t$ along the rows and all states of $X_{t+1}$ along the columns, insert the probabilities $p_{ij}$, and check that each row adds to 1. The transition matrix is usually given the symbol $P = (p_{ij})$. Different classes do NOT overlap.
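The "rows add to 1" rule is easy to check mechanically. The sketch below assumes the row convention (row i holds the probabilities of leaving state i) and uses a four-state matrix read from the flattened entries quoted in this post; that reading and the helper name `is_stochastic` are assumptions.

```python
from fractions import Fraction as F

def is_stochastic(p):
    """True if p is square, has no negative entries, and every row sums to 1."""
    n = len(p)
    return all(
        len(row) == n and all(x >= 0 for x in row) and sum(row) == 1
        for row in p
    )

# Four-state matrix on S = {1, 2, 3, 4}, as read from the flattened text.
P4 = [[F(0), F(0), F(1, 4), F(3, 4)],
      [F(1, 2), F(0), F(0), F(1, 2)],
      [F(0), F(1, 3), F(0), F(2, 3)],
      [F(0), F(0), F(1), F(0)]]

print(is_stochastic(P4))
```

With floating-point entries the exact comparison `sum(row) == 1` should be replaced by a tolerance check such as `math.isclose`.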

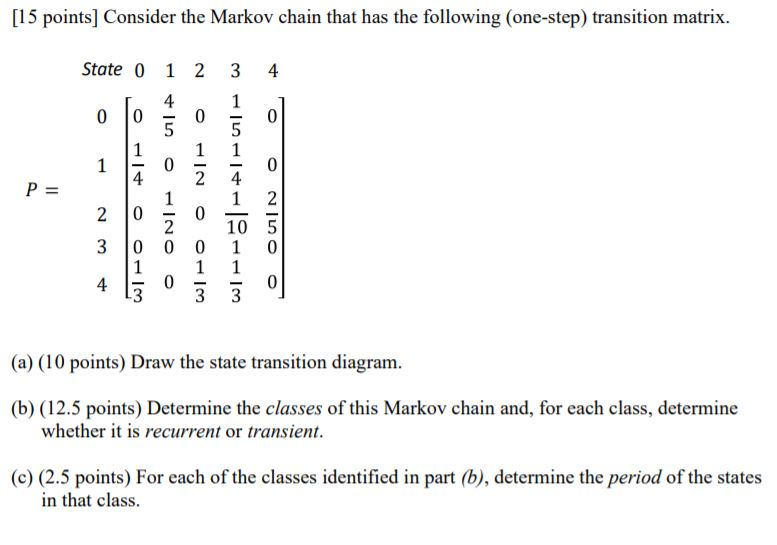

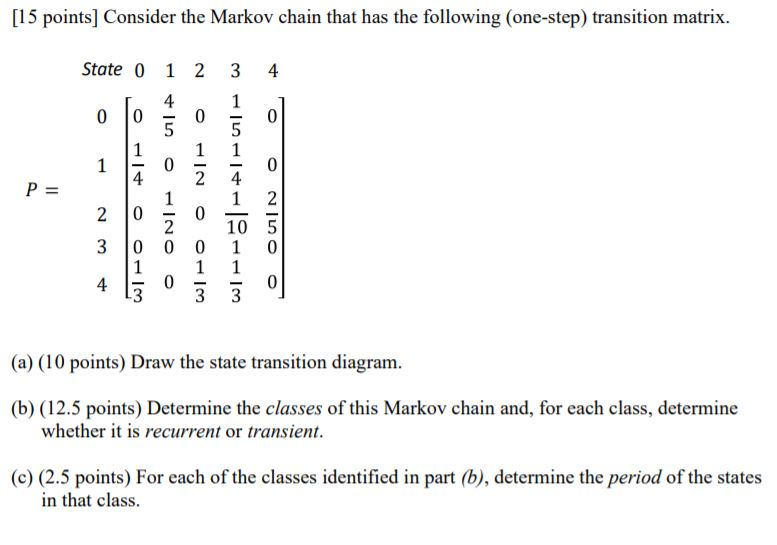

A class is a subset of states that communicate with each other. Draw the state transition diagram. 2.1 Setup and definitions: we consider a discrete-time, discrete-space stochastic process, which we write as $X(t) = X_t$ for t ...

Note that the columns and rows are ordered. Figure 11.20 - A state transition diagram.

State classes: two states are said to be in the same class if they communicate with each other; that is, if $i \leftrightarrow j$, then i and j are in the same class. Consider the Markov chain with transition probability ... Find the stationary distribution for this chain.
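Communicating classes can be found by computing which states are reachable from which. The following is a rough sketch on a made-up three-state matrix (it is not one of the exercises quoted here); the function names are hypothetical.

```python
def reachable(p, start):
    """Set of states accessible from `start` (start itself counts, via n = 0)."""
    seen = {start}
    stack = [start]
    while stack:
        i = stack.pop()
        for j, prob in enumerate(p[i]):
            if prob > 0 and j not in seen:
                seen.add(j)
                stack.append(j)
    return seen

def communicating_classes(p):
    """Partition the states into classes of mutually accessible states."""
    n = len(p)
    reach = [reachable(p, i) for i in range(n)]
    classes, assigned = [], set()
    for i in range(n):
        if i in assigned:
            continue
        # j is in the class of i when each is accessible from the other.
        cls = sorted(j for j in reach[i] if i in reach[j])
        classes.append(cls)
        assigned.update(cls)
    return classes

# Hypothetical example: states 0 and 1 communicate, state 2 is absorbing.
P = [[0.5, 0.5, 0.0],
     [0.25, 0.5, 0.25],
     [0.0, 0.0, 1.0]]

classes = communicating_classes(P)
print(classes)                 # [[0, 1], [2]]
print(len(classes) == 1)       # irreducible only if there is a single class
```

Because each state lands in exactly one class, the output also illustrates that classes partition the state space and do not overlap.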

We shall follow the convention of calling a first-order Markov chain simply a Markov chain. [Transition diagram on states A, B, C with edge probabilities 1.0, 0.25, 0.5, 0.25, 0.5, 0.5.] (a) Find the ...

Consider the Markov chain shown in Figure 11.20. Two of the ways we have discussed of finding the long-run average proportion of time spent in each state are (a) solve the eigenvalue problem $qP = q$, where q is the equilibrium vector, and (b) multiply P by itself many times until it converges.
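Both approaches can be sketched in a few lines. The example below assumes the two-state A/B chain quoted in this post and assumes the chain has a unique equilibrium vector; the function names are hypothetical. Method (a) solves $qP = q$ together with the normalisation $\sum_i q_i = 1$ exactly, and method (b) repeatedly multiplies a starting distribution by P.

```python
from fractions import Fraction as F

def stationary_by_solving(p):
    """Solve q P = q with sum(q) = 1 by Gauss-Jordan elimination (exact).

    Assumes the entries of p are Fractions and the solution is unique.
    """
    n = len(p)
    # n - 1 balance equations sum_i q_i (P[i][j] - delta_ij) = 0 ...
    rows = [[p[i][j] - (1 if i == j else 0) for i in range(n)] + [F(0)]
            for j in range(n - 1)]
    # ... plus the normalisation q_0 + ... + q_{n-1} = 1.
    rows.append([F(1)] * n + [F(1)])
    for col in range(n):
        pivot = next(r for r in range(col, n) if rows[r][col] != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        rows[col] = [x / rows[col][col] for x in rows[col]]
        for r in range(n):
            if r != col and rows[r][col] != 0:
                factor = rows[r][col]
                rows[r] = [x - factor * y for x, y in zip(rows[r], rows[col])]
    return [rows[i][n] for i in range(n)]

def stationary_by_iteration(p, steps=200):
    """Approximate q by applying q <- q P repeatedly, starting from uniform."""
    n = len(p)
    q = [1.0 / n] * n
    for _ in range(steps):
        q = [sum(q[i] * float(p[i][j]) for i in range(n)) for j in range(n)]
    return q

P = [[F(1, 3), F(2, 3)],
     [F(4, 5), F(1, 5)]]

q_exact = stationary_by_solving(P)
q_approx = stationary_by_iteration(P)
print(q_exact, q_approx)
```

For this chain the two methods agree: the exact solution is (6/11, 5/11), and the iterated vector approaches the same values. Method (b) only converges when the chain is regular, whereas method (a) needs only uniqueness of the solution.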

This means that there is a possibility of reaching j from i in some number of steps. Two states are said to communicate if it is possible to go from one to the other in a finite number of steps. So the transition matrix for the example above is ...

The matrix describing the Markov chain is called the transition matrix. We first form a Markov chain with state space $S = \{H, D, Y\}$ and the following transition probability matrix. What is the probability that, starting in state i, the Markov chain will be in state j after n steps?

Consider the two-stage Markov chain with the following state transition probability matrix. The states are ordered first H, then D, then Y.

A continuous-time process is called a continuous-time Markov chain (CTMC). Given a stochastic matrix, one can construct a Markov chain with the same transition matrix by using the entries as transition probabilities.

A regular transition matrix and Markov chain: a transition matrix T is a regular transition matrix if, for some k, $T^k$ has no zero entries. If it starts at A, it stays at A with probability 1/3 and moves to B with probability 2/3. The matrix is called the transition matrix of the Markov chain.
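The definition suggests a direct test: raise the matrix to successive powers and look for one with no zero entries. The sketch below tracks only which entries are positive, and stops at power $(n-1)^2 + 1$, which is Wielandt's bound for an n-state chain (if no power up to that point is strictly positive, none ever will be). The function name `is_regular` is hypothetical.

```python
def is_regular(t):
    """True if some power of the transition matrix t has no zero entries."""
    n = len(t)
    max_power = (n - 1) ** 2 + 1  # Wielandt's bound on the exponent to check
    # Only the zero/non-zero pattern matters, so work with booleans.
    pattern = [[x > 0 for x in row] for row in t]
    current = pattern  # pattern of t^1
    for _ in range(max_power):
        if all(all(row) for row in current):
            return True
        # Pattern of the next power: entry (i, j) is positive when some
        # intermediate state k links i to j.
        current = [[any(current[i][k] and pattern[k][j] for k in range(n))
                    for j in range(n)] for i in range(n)]
    return False

# Two-state A/B chain quoted in the text: every entry is already positive.
print(is_regular([[1 / 3, 2 / 3], [4 / 5, 1 / 5]]))   # True
# A chain that flips state every step is periodic, hence not regular.
print(is_regular([[0.0, 1.0], [1.0, 0.0]]))           # False
```

A matrix with some zero entries can still be regular, for example `[[0, 1], [0.5, 0.5]]`, whose square is strictly positive.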

The (i, j)th entry of the matrix $P^n$ gives the probability that the Markov chain, starting in state i, will be in state j after n steps.
